Request Your Free White Paper Now:

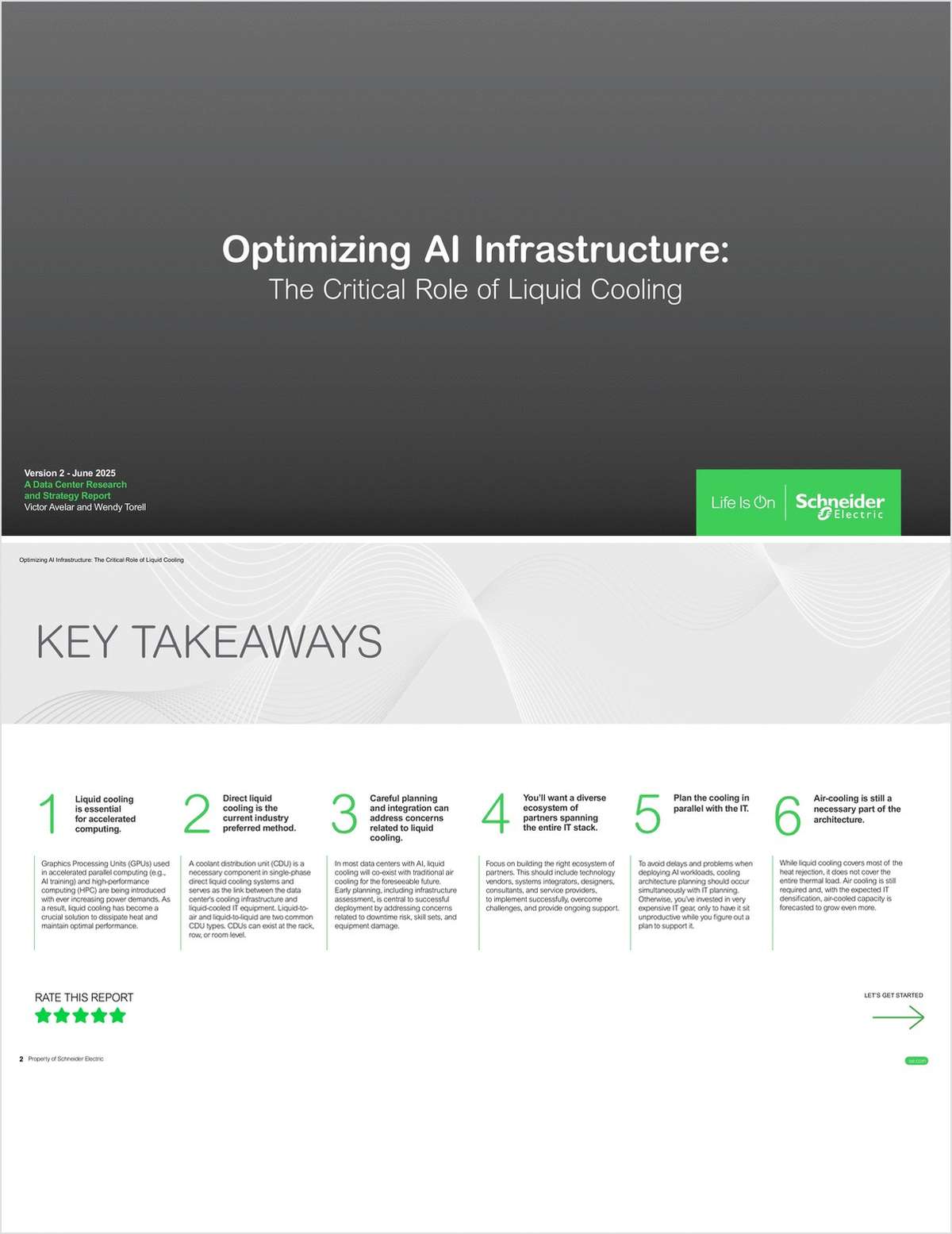

"Optimizing AI Infrastructure: The Critical Role of Liquid Cooling"

GPUs driving AI workloads produce heat that challenges air cooling. Direct liquid cooling with cold plates and coolant units efficiently manages thermal loads while preserving rack density. Organizations must plan carefully to address timelines, expertise, downtime, and warranties. Download the white paper for insights.

AI advancements, especially GPU-powered workloads, are pushing data centers beyond air cooling limits. With GPUs consuming up to 1,400 watts, organizations face challenges in heat management and efficiency.

This white paper highlights direct liquid cooling as the preferred solution for AI infrastructure, addressing concerns like downtime risk, equipment damage, and skill requirements. Key insights include:

- Coolant distribution units (CDUs) and their role in heat transfer

- Evaluating infrastructure compatibility with liquid cooling

- Best practices for hybrid cooling environments

Discover how planning and the right ecosystem enable successful liquid cooling for AI workloads.

Offered Free by: Schneider Electric