Request Your Free Product Overview Now:

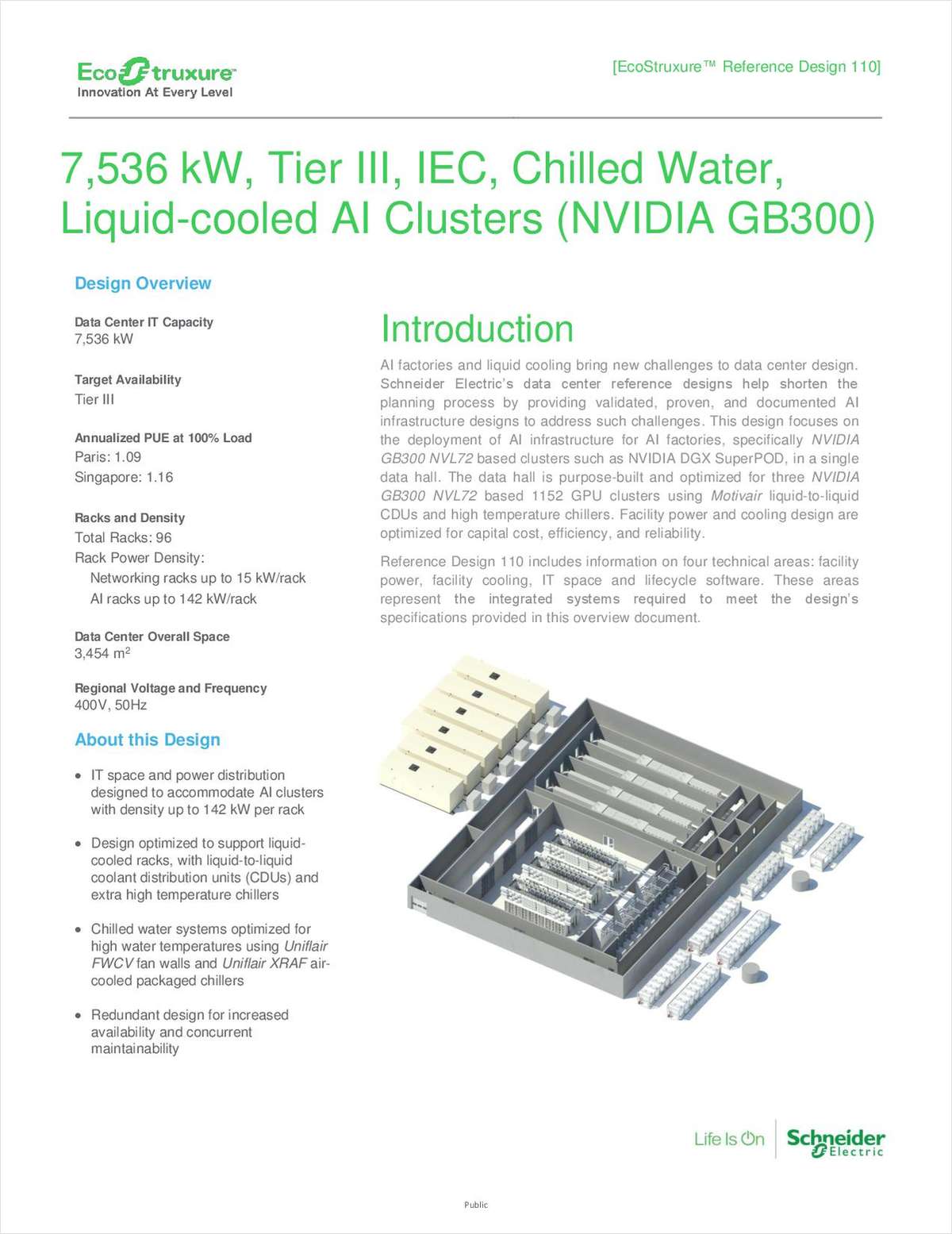

"7,536 kW, Tier III, IEC, Chilled Water, Liquid-cooled AI Clusters"

This reference design addresses the power and cooling demands of GPU clusters drawing up to 142 kW per rack. The Tier III facility achieves a PUE of 1.09-1.16 and combines liquid cooling, redundant power, and lifecycle management software. Review this overview for full technical specifications.
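To put the PUE range in context, the sketch below applies the standard definition (PUE = total facility power / IT power) to the headline capacity. It assumes the 7,536 kW figure refers to IT load, which this page does not state explicitly; the function name `facility_power_kw` is illustrative only.

```python
def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility power implied by an IT load and a PUE value.

    PUE is total facility power divided by IT power, so it can never be below 1.0.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * pue


# Assumption: the 7,536 kW in the design title is the IT load.
IT_LOAD_KW = 7536.0

for pue in (1.09, 1.16):
    total = facility_power_kw(IT_LOAD_KW, pue)
    overhead = total - IT_LOAD_KW  # cooling, power distribution losses, and so on
    print(f"PUE {pue:.2f}: total {total:,.0f} kW, overhead {overhead:,.0f} kW")
```

Under that assumption, the stated range corresponds to roughly 8,214 kW to 8,742 kW of total facility power, or about 680 kW to 1,210 kW of non-IT overhead.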

AI factories and liquid cooling add complexity to data center planning. High-density AI clusters, at up to 142 kW per rack, need purpose-built electrical and cooling systems that go beyond what traditional data center designs were built to handle.

This reference design provides a validated blueprint for deploying NVIDIA GB300 NVL72-based AI clusters in a 7,536 kW Tier III facility. It features:

· Distributed redundant power with 3+1 UPS for extreme rack densities

· Dual-loop cooling with chilled water fan walls and liquid-to-liquid CDUs

· Lifecycle software for safety analysis, CFD modeling, and monitoring

Learn how this engineered approach accelerates AI infrastructure deployment in the full product overview.

Offered Free by: Schneider Electric
